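Approach

- Crawl the list pages and store the detail page links

- Crawl the detail page links

- Download the detail page images

- Save the data to MySQL

Key points

- Product list page: crawl the product link, price, name, image link, review link, and ASIN. This requires logging in or changing the delivery address (the login is performed once). At first the delivery address could be changed by clicking through with selenium, but later even changing the ZIP code was rejected, and the address could only be changed after logging in (Amazon shows different product information for different addresses). Logging in also requires a verification step through your own email, so here the login is done by hand before the crawl starts.

- Product detail page: crawl each product link for the COLOR and SIZE variants, the store name, and the details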

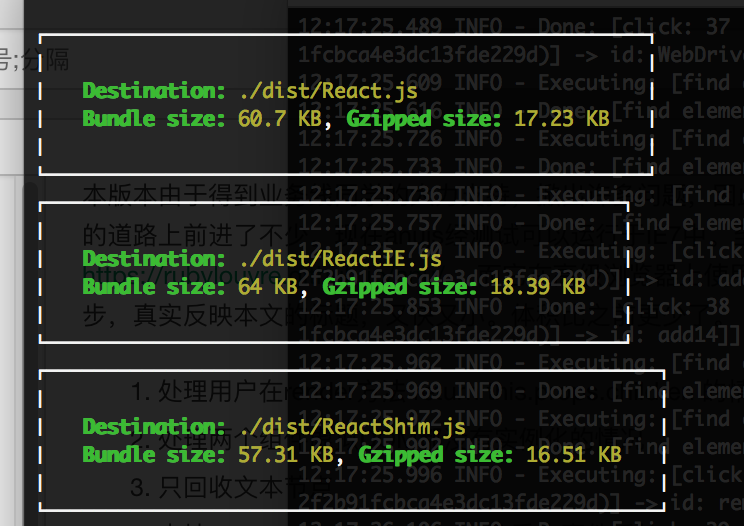

- 1 driver crawls the list pages

- 5 drivers crawl the detail pages

- requests downloads the images
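The ASIN listed in the key points is read out of the product link. Below is a minimal sketch of that step, reusing the regular expression that appears later in `parse_list`. The helper name `extract_asin` and both sample URLs are hypothetical and only serve as illustration.

```python
import re
from typing import Optional

# Same pattern as the crawler: find "dp", then capture from the first "B"
# up to the next "/" or "%" (the "%" covers URL-encoded redirect links).
ASIN_PATTERN = re.compile(r"dp.*?(B.*?)[%/]")


def extract_asin(item_url: Optional[str]) -> Optional[str]:
    """Return the first ASIN-like token found in item_url, or None."""
    if not item_url:
        return None
    match = ASIN_PATTERN.search(item_url)
    return match.group(1) if match else None


# Hypothetical URLs, for illustration only.
plain = "https://www.amazon.com/Some-Dress/dp/B08XYZ1234/ref=sr_1_2"
encoded = "https://www.amazon.com/gp/slredirect/picassoRedirect.html?url=%2Fdp%2FB07ABC5678%2Fref"

print(extract_asin(plain))
print(extract_asin(encoded))
print(extract_asin("https://www.amazon.com/s?page=2"))
```

The pattern is deliberately loose, so it can misfire when "dp" happens to appear earlier in the URL; a stricter pattern may be worth trying if the stored ASINs look wrong.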

Importing modules

from selenium import webdriver
from selenium.webdriver.support.ui import Select
from selenium.common.exceptions import NoSuchElementException
# from selenium.webdriver.support import expected_conditions as EC
# from selenium.webdriver.common.by import By
# from selenium.webdriver.support.ui import WebDriverWait
import threading, requests
import queue, re, pymysql

# Note: the find_element_by_* calls used below are the Selenium 3 API;
# they were removed in Selenium 4.3.

options = webdriver.ChromeOptions()
# No quotes around the value: they would become part of the user-agent string
options.add_argument("user-agent=Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/89.0.4389.90 Safari/537.36")
options.add_argument("--lang=en-US")
# Block images (value 2) so pages load faster
prefs = {"profile.managed_default_content_settings.images": 2, 'permissions.default.stylesheet': 2}
options.add_experimental_option("prefs", prefs)
executable_path = r"C:\Users\AppData\Local\Google\Chrome\Application\chromedriver.exe"

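Extracting data from the product list page: a big pile of try/except blocks (not strictly necessary, but Amazon's XPaths seem to change as the number of requests grows)

Not everything is extracted on the detail page because some information appears on the list page but may be missing from the detail page. Take the most important field, the price: you would expect the detail page to always show it, yet sometimes it simply does not. If the code below reports element location errors frequently, stop the crawler by hand and work out the XPaths again.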
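The "stop when location errors become frequent" rule can also be automated. The sketch below is one possible way to do it and is not part of the original crawler: `FieldExtractor`, `LocatorFailureLimit`, and the threshold value are all hypothetical names and numbers. Each lookup is passed in as a callable, so the helper does not depend on selenium.

```python
from typing import Callable, Optional


class LocatorFailureLimit(Exception):
    """Raised when too many consecutive lookups of a required field fail."""


class FieldExtractor:
    def __init__(self, max_consecutive_failures: int = 20) -> None:
        self.max_consecutive_failures = max_consecutive_failures
        self.consecutive_failures = 0

    def extract(self, lookup: Callable[[], str], required: bool = False) -> Optional[str]:
        """Run lookup(); return its value, or None if it raises.

        Only required fields count towards the failure limit, because
        optional fields (store name, rating) are legitimately absent at times.
        """
        try:
            value = lookup()
        except Exception:
            if required:
                self.consecutive_failures += 1
                if self.consecutive_failures >= self.max_consecutive_failures:
                    raise LocatorFailureLimit(
                        "%d required lookups failed in a row; the XPaths probably changed"
                        % self.consecutive_failures
                    )
            return None
        if required:
            self.consecutive_failures = 0  # a success resets the streak
        return value


def broken_lookup() -> str:
    # Stands in for a selenium call that raises NoSuchElementException
    raise LookupError("element not found")


extractor = FieldExtractor(max_consecutive_failures=3)
print(extractor.extract(lambda: "$19.99"))  # optional field that is present
print(extractor.extract(broken_lookup))     # optional field that is missing
```

In the crawler, a lookup would be something like `lambda: item_box.find_element_by_xpath(...).text`, with `required=True` for the product link.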

def parse_list(item_box, cursor):
    """Extract one product card from the list page and insert it into item_list1."""
    try:
        item_url = item_box.find_element_by_xpath(
            './/div[@class = "a-section a-spacing-none a-spacing-top-small"]//h2/a').get_attribute("href")
        # re.findall returns a list; keep only the first match so a plain string is stored
        asin_matches = re.findall('dp.*?(B.*?)[%/]', item_url)
        item_asin = asin_matches[0] if asin_matches else None
    except Exception:
        print("element location error")
        item_url, item_asin = None, None
    try:
        img_url = item_box.find_element_by_xpath(
            './/div[@class = "a-section a-spacing-none s-image-overlay-black"]//img').get_attribute("src")
    except Exception:
        img_url = None
        print("img_url location error")
    try:
        store_name = item_box.find_element_by_xpath(
            './/div[@class = "a-section a-spacing-none a-spacing-top-small"]//h5/span').text
    except Exception:
        store_name = None
    try:
        title = item_box.find_element_by_xpath(
            './/div[@class = "a-section a-spacing-none a-spacing-top-small"]//h2//span').text
    except Exception:
        title = None
        print("title location error")
    try:
        item_star = item_box.find_element_by_xpath(
            './/div[@class = "a-row a-size-small"]/span[1]').get_attribute("aria-label")
    except Exception:
        item_star = None
    try:
        item_count = item_box.find_element_by_xpath(
            './/div[@class = "a-row a-size-small"]/span[2]').get_attribute("aria-label")
    except Exception:
        item_count = None
    try:
        review_url = item_box.find_element_by_xpath(
            './/div[@class = "a-row a-size-small"]/span[2]/a').get_attribute("href")
    except Exception:
        review_url = None
        print("??? count present but no review link ???")
    try:
        item_price = item_box.find_element_by_xpath('.//span[@class = "a-offscreen"]').get_attribute("innerHTML")
    except Exception:
        item_price = None
    # Only write to the database when item_url was found
    if item_url:
        sql = """
        insert into item_list1(
        item_url,item_asin,img_url,store_name,title,item_star,item_count,review_url,item_price
        )values (%s,%s,%s,%s,%s,%s,%s,%s,%s);
        """
        cursor.execute(sql, (
            item_url, item_asin, img_url, store_name, title, item_star, item_count, review_url, item_price))
    return item_url, item_asin, img_url

Extracting data from the detail page

def item_parse(item_asin, item_url, driver):
    """Extract price, variants, and details from the detail page and insert them into item_list2."""
    try:
        price = driver.find_element_by_class_name("a-lineitem").text
    except Exception:
        price = None
    try:
        sku_list = driver.find_elements_by_xpath("//div[@id='variation_color_name']/ul/li")
        color = str([i.get_attribute("title") for i in sku_list])
    except Exception:
        color = None
    try:
        size_select = Select(driver.find_element_by_id("native_dropdown_selected_size_name"))
        size = str([m.text for m in size_select.options])
    except Exception:
        size = None
    try:
        details = driver.find_element_by_id("detailBulletsWrapper_feature_div").text
    except NoSuchElementException:
        # Some pages use a different container for the details block
        try:
            details = driver.find_element_by_id("prodDetails").text
        except Exception:
            details = None
    if item_url:
        cursor, db = mysql_connect()
        try:
            sql = """insert into item_list2(item_url,item_asin,price,color,size,details)values (%s,%s,%s,%s,%s,%s);"""
            cursor.execute(sql, (item_url, item_asin, price, color, size, details))
            db.commit()
        finally:
            # Close the per-item connection so open connections do not pile up
            db.close()

MySQL connection

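def mysql_connect():
    """Open a connection to the local item_list database; return (cursor, connection)."""
    db = pymysql.connect(
        host="127.0.0.1",
        user="root",
        password="xiaoqmysql",
        database="item_list",
        port=3306
    )
    cursor = db.cursor()
    return cursor, db

Multithreaded crawling with the producer-consumer model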
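Before the real classes, here is a stripped-down sketch of the same producer-consumer layout with the browser and database work replaced by string operations. Unlike the crawler, which lets its consumers exit after a 60 second queue timeout, this sketch shuts them down with a sentinel object; that is a swapped-in technique, shown only as an alternative. All names (`STOP`, `producer`, `consumer`, `run_pipeline`) are hypothetical.

```python
import queue
import threading

STOP = object()  # sentinel: tells one consumer that no more work will arrive


def producer(page_queue, item_queue):
    """Take pages until none are left and emit three fake items per page."""
    while True:
        try:
            page = page_queue.get_nowait()
        except queue.Empty:
            return
        for n in range(3):
            item_queue.put("page%d-item%d" % (page, n))


def consumer(item_queue, results, lock):
    """Process items until the sentinel is received."""
    while True:
        item = item_queue.get()
        if item is STOP:
            return
        with lock:
            results.append(item.upper())  # stands in for parsing a detail page


def run_pipeline(num_pages=4, num_producers=2, num_consumers=5):
    page_queue, item_queue = queue.Queue(), queue.Queue()
    for page in range(num_pages):
        page_queue.put(page)
    results, lock = [], threading.Lock()
    producers = [threading.Thread(target=producer, args=(page_queue, item_queue))
                 for _ in range(num_producers)]
    consumers = [threading.Thread(target=consumer, args=(item_queue, results, lock))
                 for _ in range(num_consumers)]
    for t in producers + consumers:
        t.start()
    for t in producers:
        t.join()
    # All producers are done, so one sentinel per consumer ends the run
    for _ in consumers:
        item_queue.put(STOP)
    for t in consumers:
        t.join()
    return sorted(results)


print(len(run_pipeline()))
```

The sentinel lets the consumers stop as soon as the work is finished, whereas the timeout approach is simpler but keeps every consumer alive for an extra minute.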

Producer: crawl every page under a category

class ProductList(threading.Thread):
    def __init__(self, page_queue, image_queue, item_queue, driver, *args, **kwargs):
        super(ProductList, self).__init__(*args, **kwargs)
        self.page_queue = page_queue
        self.image_queue = image_queue
        self.item_queue = item_queue
        self.driver = driver

    def run(self) -> None:
        while True:
            # get_nowait avoids the race in which another thread takes the last URL
            # between an empty() check and a blocking get()
            try:
                page_url = self.page_queue.get_nowait()
            except queue.Empty:
                break
            self.driver.get(page_url)
            # WebDriverWait(self.driver, 5).until(
            #     EC.presence_of_element_located((By.CLASS_NAME, "a-section a-spacing-medium a-text-center"))
            # )
            item_boxes = self.driver.find_elements_by_xpath(
                '//div[@class = "a-section a-spacing-medium a-text-center"]')
            cursor, db = mysql_connect()
            for item_box in item_boxes:
                try:
                    item_url, item_asin, img_url = parse_list(item_box, cursor)
                    db.commit()
                    # Only queue products whose link was actually found
                    if item_url:
                        self.image_queue.put({"item_asin": item_asin, "img_url": img_url})
                        self.item_queue.put({"item_asin": item_asin, "item_url": item_url})
                except Exception as e:
                    print(e, page_url)
            db.close()
        print("thread %s has finished" % (threading.current_thread().name))

Crawling detail page information

class item_info(threading.Thread):
    def __init__(self, item_queue, driver, *args, **kwargs):
        super(item_info, self).__init__(*args, **kwargs)
        self.item_queue = item_queue
        self.driver = driver

    def run(self):
        while True:
            try:
                item_obj = self.item_queue.get(timeout=60)
            except queue.Empty:
                # Nothing arrived for 60 seconds: assume the producers are done
                break
            item_asin = item_obj.get("item_asin")
            item_url = item_obj.get("item_url")
            try:
                self.driver.get(item_url)
                item_parse(item_asin, item_url, self.driver)
            except Exception as e:
                print(e, item_url)

Downloading images

class image_get(threading.Thread):
    def __init__(self, image_queue, *args, **kwargs):
        super(image_get, self).__init__(*args, **kwargs)
        self.image_queue = image_queue

    def run(self):
        while True:
            try:
                image_obj = self.image_queue.get(timeout=60)
            except queue.Empty:
                # Nothing arrived for 60 seconds: assume the producers are done
                break
            # The producer stores the link under the key "img_url"
            image_url = image_obj.get("img_url")
            item_asin = image_obj.get("item_asin")
            try:
                image = requests.get(image_url, timeout=30)
                with open(r'D:\amazon_new\images\{}.jpg'.format(item_asin), 'wb') as fp:
                    fp.write(image.content)
            except Exception as e:
                print(e, image_url)

Starting the crawler (images are not downloaded here; start that thread when needed)

def main():
    page_queue = queue.Queue(500)
    image_queue = queue.Queue(100000)
    item_queue = queue.Queue(2000000)
    # Queue the list page URLs for pages 2 to 199
    for i in range(2, 200):
        url = 'https://www.amazon.com/s?i=fashion-womens-clothing&rh=n%3A2368343011&fs=true&page={}&qid=1616319102'.format(
            i)
        page_queue.put(url)
    for x in range(2):
        driver = webdriver.Chrome(executable_path=executable_path, options=options)
        driver.get("https://amazon.com")
        # Pause until the manual login in this browser window is finished
        input("Has the login finished? ")
        th1 = ProductList(page_queue, image_queue, item_queue, driver, name="list page browser %d" % x)
        print(th1.name)
        th1.start()
    for y in range(5):
        driver = webdriver.Chrome(executable_path=executable_path, options=options)
        th2 = item_info(item_queue, driver, name="detail page crawler %d" % y)
        th2.start()


if __name__ == '__main__':
    main()
